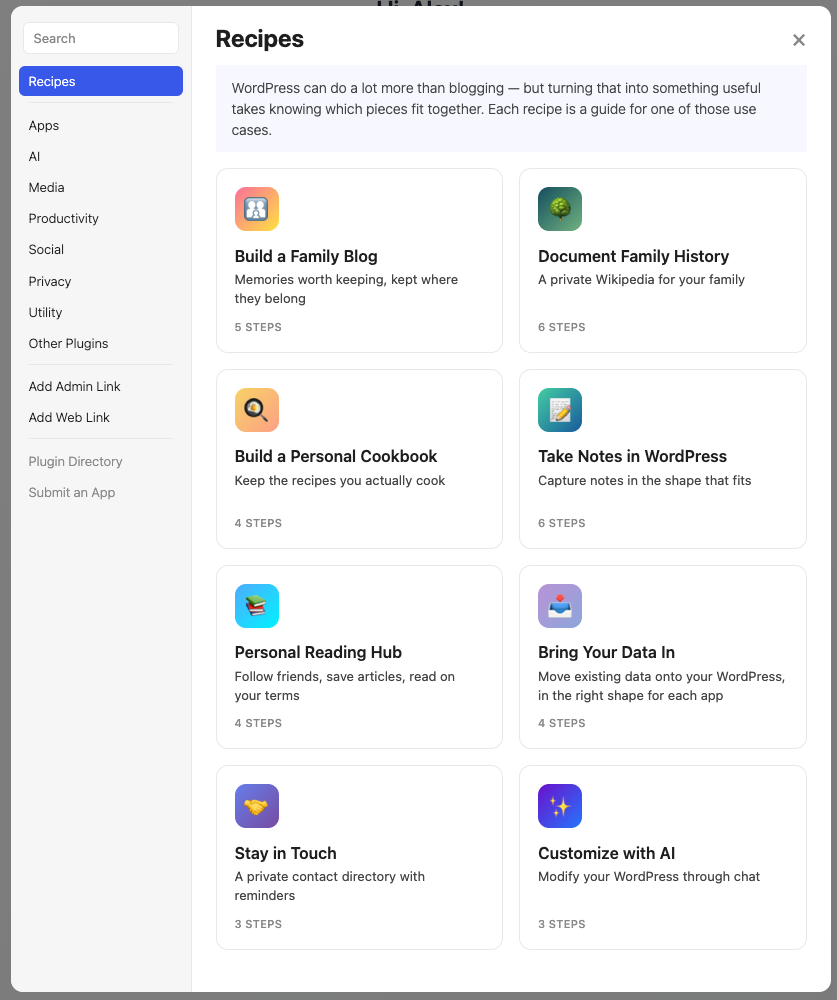

AI makes personal software easier to create, but it still needs a place to live. I see WordPress as an unexpected but practical platform for personal apps and data.

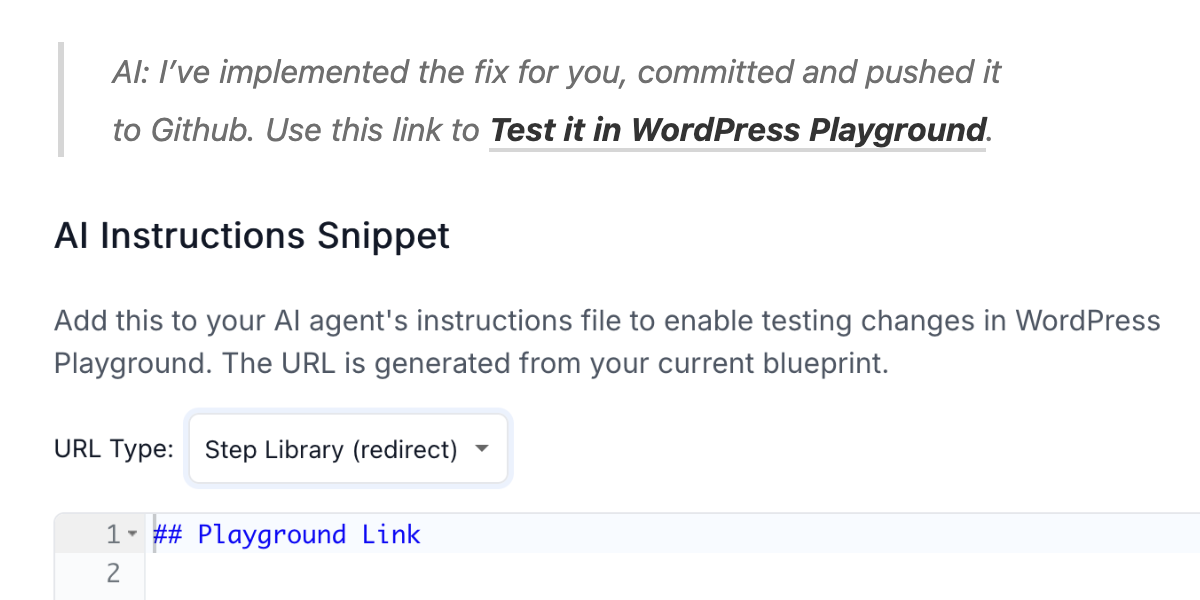

With AI coding assistants and WordPress Playground, you can now develop WordPress plugins from your phone. Here’s how to set it up.